The Fraud Problem Reshaping Survey Research

Author: D. Dutwin, Executive Director and Senior Vice President, AmeriSpeak

AmeriSpeak® is NORC’s nationally representative, probability-based survey panel of U.S. households.

February 2026

Survey fraud is accelerating, and its impact is structural, not incidental, raising urgent questions about data quality and survey design.

By now, Sean Westwood’s 2025 article on artificial intelligence (AI) fraud in nonprobability surveys has permeated the survey research industry. Most major nonprobability panel providers, including YouGov, Verasight, Dynata, and CloudResearch, have reacted swiftly to assure the community that they have adequate solutions to the problem and that, indeed, it may be a problem with other panels but not with their own. That may be true, though full transparency and rigorous research to back up these claims have, to date, been absent.

What often gets overlooked in these discussions is that fraud isn’t just a technological issue; it’s a structural one. Nonprobability systems were built for speed and scale, not identity verification. When a system operates not by invitation only but as an open bar, fraud becomes a feature of the system, not a glitch.

This distinction matters: if identity is not known a priori, no amount of downstream cleaning fully solves the problem. This is one of the central themes we’ve seen across the research community, including in recent research our team published demonstrating why nonprobability survey samples can be a dangerous gamble.

The Scale of the Problem

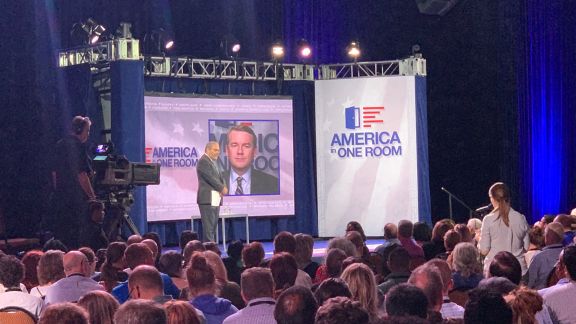

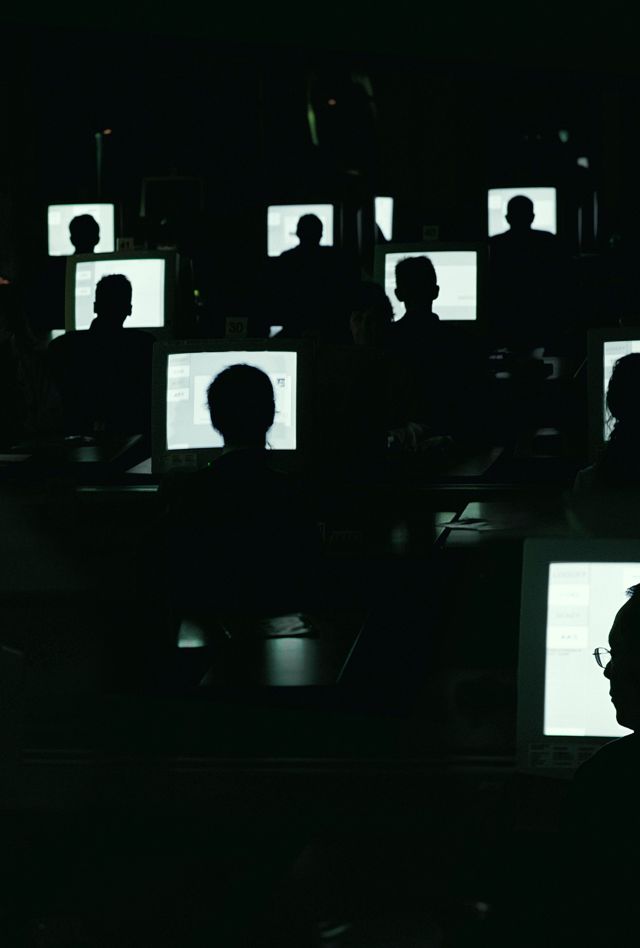

According to the most recent Insights Association webinar, “The ‘Existential’ Threat of AI Agents to Survey Research: Understanding the Problem and How to Solve It” (January 29, 2026), most fraud today comes from human click farms (operations that employ low-paid workers using rows of devices to generate high volumes of artificial online interactions). AI fraud may still be in its infancy.

The presentation claimed that 40 percent of nonprobability interviews conducted in 2025 were likely fraudulent, or about 2 billion fraudulent interviews. Because most of this fraud is human-based, the solutions discussed in this and other webinars center on verification and trap questions. The presenters suggested that trap questions are generally effective against low-engagement human respondents but far less effective against individuals who know English well enough, and pay just enough attention, to answer them correctly.
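To illustrate why trap questions catch inattentive respondents but not attentive fraudsters, here is a minimal Python sketch. The `Response` record, the trap item ID `q_trap`, and its instructed answer are hypothetical assumptions, not any vendor’s actual screening logic; the point is only that a filter based on the trap item alone keeps a fraudulent respondent who reads the question.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    respondent_id: str
    # Maps a (hypothetical) question ID to the answer text given.
    answers: dict = field(default_factory=dict)


# Hypothetical trap item: the question text instructs respondents
# to select "Strongly disagree" regardless of their opinion.
TRAP_QUESTION_ID = "q_trap"
TRAP_EXPECTED = "Strongly disagree"


def passes_trap(response: Response) -> bool:
    """True if the respondent gave the instructed answer to the trap item."""
    return response.answers.get(TRAP_QUESTION_ID) == TRAP_EXPECTED


def screen(responses):
    """Split responses into (kept, flagged) using the trap item alone."""
    kept = [r for r in responses if passes_trap(r)]
    flagged = [r for r in responses if not passes_trap(r)]
    return kept, flagged


responses = [
    Response("r1", {"q_trap": "Strongly disagree", "q1": "Yes"}),  # genuine, attentive
    Response("r2", {"q_trap": "Agree", "q1": "Yes"}),              # low-engagement click-through
    Response("r3", {"q_trap": "Strongly disagree", "q1": "No"}),   # fraudulent but attentive
]

kept, flagged = screen(responses)
print([r.respondent_id for r in kept])     # ['r1', 'r3']
print([r.respondent_id for r in flagged])  # ['r2']
```

Respondent `r3` passes the screen even though it is fraudulent, which is why trap questions are typically paired with other checks rather than used alone.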

Verification can take many forms, but notably, most vendors are unable to verify everyone (not everyone responds to a Zoom request, appears in voter registration records, etc.). Moreover, verification seems to occur only for the core panel. For many surveys, particularly those with large quotas for small-incidence groups, a vendor will go off-panel to fill the final quota. This may be why the Pew Research Center and others find that responses from harder-to-reach populations tend to be the least credible.
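The arithmetic behind this concern can be sketched in a few lines of Python. All inputs are hypothetical: the function assumes that every off-panel complete is unverified and that only a fixed share (`core_verified_rate`) of core-panel completes is verified. Under those assumptions, a subgroup quota that outruns the core panel ends up with a much larger unverified share than a general-population quota.

```python
def unverified_share(quota: int, core_completes: int, core_verified_rate: float) -> float:
    """Fraction of a filled quota that comes from unverified respondents.

    Assumptions (illustrative only):
    - the core panel supplies completes first, up to the quota;
    - only `core_verified_rate` of core-panel completes are verified;
    - any remainder is filled off-panel and is entirely unverified.
    """
    from_core = min(quota, core_completes)
    verified = from_core * core_verified_rate
    return (quota - verified) / quota


# Hypothetical general-population quota, easily filled from the core panel.
print(unverified_share(quota=1000, core_completes=5000, core_verified_rate=0.9))  # 0.1

# Hypothetical small-incidence subgroup where the core panel runs short.
print(unverified_share(quota=500, core_completes=200, core_verified_rate=0.9))    # 0.64
```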

Why Fraud Behaves Like Bias

It’s also critical to understand that fraud isn’t simply “more error”; it behaves like bias. Random error tends to average out as samples grow, but bias does not. Fraudulent respondents cluster in predictable ways: speeding, language inconsistencies, duplicated demographic profiles, and improbable behavioral claims. These respondents distort the substantive findings of studies, particularly subgroup analyses, trend lines, and estimates for small-incidence populations. Treating fraud as random error dramatically understates its impact.
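A short simulation makes the bias-versus-error distinction concrete. It assumes, hypothetically, a subgroup whose true “yes” rate is 10 percent, fraudulent respondents who answer “yes” 50 percent of the time, and the 40 percent fraud rate cited in the webinar. The expected estimate is (1 - f) × p_true + f × p_fraud = 0.6 × 0.10 + 0.4 × 0.50 = 0.26, and larger samples converge on 0.26 rather than the true 0.10.

```python
import random


def expected_estimate(p_true: float, p_fraud: float, fraud_rate: float) -> float:
    """Expected share answering 'yes' when a fraction of the sample is fraudulent."""
    return (1 - fraud_rate) * p_true + fraud_rate * p_fraud


def simulate(n: int, p_true: float, p_fraud: float, fraud_rate: float, seed: int = 0) -> float:
    """Draw n respondents; each is fraudulent with probability fraud_rate."""
    rng = random.Random(seed)
    yes = 0
    for _ in range(n):
        p = p_fraud if rng.random() < fraud_rate else p_true
        yes += int(rng.random() < p)
    return yes / n


# Hypothetical inputs; only the 40 percent fraud rate comes from the webinar.
P_TRUE, P_FRAUD, FRAUD_RATE = 0.10, 0.50, 0.40

print("expected:", round(expected_estimate(P_TRUE, P_FRAUD, FRAUD_RATE), 2))  # expected: 0.26
for n in (1_000, 10_000, 100_000):
    # Sampling noise shrinks as n grows, but the estimate stays near 0.26, not 0.10.
    print(n, round(simulate(n, P_TRUE, P_FRAUD, FRAUD_RATE), 3))
```

Adding more respondents narrows the spread of the estimate without moving it toward the true value, which is the sense in which fraud behaves like bias rather than noise.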

The Insights Association webinar did note at least one effective method for AI detection and mentioned that firms have up to a dozen other methods. There is evidence that these AI identification methods are highly effective (for now), but while larger panel companies can afford to deploy them, smaller and homegrown nonprobability survey efforts probably cannot.

It is likely true that our AI detection methods are becoming increasingly effective. But two concerns persist. First, human fraud remains the predominant form of fraud, and detecting it appears to be at least partly ineffective, given the roughly 40 percent fraud rate. Second, as the presentation noted, with AI fraud we are just entering what will be a long war of attrition, with one side innovating new ways to convince us that they are a “real human” and the other side finding new ways to discover the truth.

The Probability-Based Alternative

No single tool solves fraud, but robust systems do. Larger providers may deploy variations of these methods, but the real differentiator is whether identity is verified before respondents enter the ecosystem.

This is why probability-based recruitment remains inoculated against fraud. When respondents originate from verified physical addresses and are recruited through controlled, documented methods, the issue of fraud rightly never even enters the conversation. Open opt-in models simply cannot replicate this foundational protection.
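As a rough illustration of why knowing identity in advance matters, the sketch below draws a simple random sample from a hypothetical address frame and issues each sampled address a one-time invitation code; enrollment succeeds only with an issued, unused code. The frame, the `InvitationRegistry` class, and the code format are assumptions for illustration and do not describe AmeriSpeak’s actual recruitment procedures.

```python
import random
import secrets


class InvitationRegistry:
    """Hypothetical invitation-only gate: only sampled addresses receive a code."""

    def __init__(self):
        self._codes = {}    # invitation code -> sampled address
        self._used = set()  # codes that have already been redeemed

    def invite(self, address: str) -> str:
        """Issue a unique, hard-to-guess one-time code for a sampled address."""
        code = secrets.token_urlsafe(8)
        while code in self._codes:  # regenerate on the (very unlikely) collision
            code = secrets.token_urlsafe(8)
        self._codes[code] = address
        return code

    def enroll(self, code: str):
        """Return the address tied to the code on first use; None otherwise."""
        if code not in self._codes or code in self._used:
            return None
        self._used.add(code)
        return self._codes[code]


# Hypothetical address frame of 100 households; a simple random sample of 5
# gives each address a known selection probability of 5/100.
frame = [f"{i} Main St" for i in range(1, 101)]
rng = random.Random(42)
sampled = rng.sample(frame, k=5)

registry = InvitationRegistry()
codes = [registry.invite(address) for address in sampled]

first = registry.enroll(codes[0])
print(first == sampled[0])        # True
print(registry.enroll(codes[0]))  # None: code already used
print(registry.enroll("walk-in")) # None: never invited
```

The design choice being illustrated is that entry is controlled before any data are collected: someone who was never sampled has no path into the panel, so there is nothing for downstream cleaning to catch.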

For a deeper look at the structural risks within nonprobability sampling, and why fraud is a symptom of more fundamental design issues, I point you again to the research our team did on the dangerous gamble of nonprobability samples. It expands on many of the themes I raise and provides a framework for evaluating sample quality.

Fraud is not a temporary disruption—it’s a defining challenge for nonprobability surveys. The question is no longer whether fraud exists; it’s which methodological foundation you choose to stand on.

Main Takeaways

- Fraud in nonprobability surveys is both widespread and structurally rooted.

- Probability-based recruitment inherently limits fraud exposure.

- Fraud is not a passing issue; it is now a defining challenge for survey research.

Suggested Citation

Dutwin, D. (2026, February 25). The Fraud Problem Reshaping Survey Research. [Web blog post]. NORC at the University of Chicago. Retrieved from www.norc.org.